
Everything posted by jmanganelli

-

Looking for license to purchase..

jmanganelli replied to N.D's topic in Buying and Selling Vectorworks Licenses

Hi ND, I direct messaged you last week, but I guess you did not get it. Here is my response: I am willing to sell my 2021 Vectorworks Architect license to you. I am on Vectorworks Service Select (VSS), and I paid for the coming year a few weeks ago, so I believe you should receive the upgrade to Vectorworks Architect 2022 and all other updates for the next 12 months at no additional charge. As for price, I'll give you about 31% off list price: $3,045 USD × ~0.69 ≈ $2,100 USD.

Has anyone looked at these: https://www.virtuosity.com/product/itwin-design-review-ad-hoc-workflow/ and https://www.virtuosity.com/product/projectwise-explorer-virtuoso-subscription/ I am intrigued, and I will test Bentley's ProjectWise and iTwin tools with Vectorworks at some point in the next couple of months. Bentley makes great tools overall, but the cost, the small user community, and the limited training materials have been concerns for me. Through this Virtuosity platform, Bentley appears to be trying to lower these barriers to small-firm adoption.

-

VECTORWORKS CLOUD SERVICES: Interoperability, Collaboration, Common Data Environments (CDEs), Digital Twins, and Performance Simulation are implicit subthemes in many of the roadmap features listed. Really, all of these subthemes are facets of Cloud Computing. Vectorworks offers cloud computing services, as well as integrations and/or interoperability with collaboration platforms and tools, common data environments, digital twins, and performance simulation tools (including arch viz, energy analysis, crowd flow, lighting effects, structural analysis, and lighting control systems logic). Please consider making Vectorworks Cloud Services a major theme of the roadmap, and please address more explicitly the roadmaps for the subthemes of the Cloud Services theme listed above. The post about Omniverse is interesting in part because: there are many ways to participate in NVidia's Omniverse, ranging from simple export/import of standard file types all the way to bi-directional, real-time integration; the partnering companies range from small specialty software companies to very large multinational companies; the partnering companies come from a wide range of industries, so participation is not just about joining another competing AEC CDE upstart but about access to and collaboration with potential clients, collaborators, and vendors across many industries; and ultimately it does not matter whether Omniverse, the Microsoft Cloud, the Oracle Cloud, the Amazon Cloud, or any of the many other cloud vendors achieves a network effect, or whether, as the name Omniverse suggests, there are many unique compositions of public and private clouds across a wide range of scales and capacities, all reliant on the same underlying server and computing architectures.
Rather, the big point is that participation in project delivery, and in commerce and civil society, is already in large part participation in a number of distinct and integrated cloud computing ecosystems, and this dynamic will only continue to grow as a business concern. So part of the roadmap should address how the Vectorworks tools and platform engage cloud computing concerns and workflows. SECURITY: Assuming that almost all Vectorworks users already have to execute projects across a range of cloud services, and that the percentage of Vectorworks users whose project delivery depends on cloud services will only continue to grow, please also consider making Security a major theme of the feature roadmap. This can include: security of the program's system architecture; security of file and data transfer to and from Vectorworks Cloud Services and other cloud services; security as part of user workflows (especially collaborative workflows); and security as it relates to the Community Board and Vectorworks' other social media platforms (given the increasing prevalence of social-engineering-based phishing and spear phishing facilitated by mining social media platforms and forums). Please consider adding a Security theme to the roadmap. INTERCONNECTED SYSTEMS OF SYSTEMS: Internet of Things (IoT), Industrial Internet of Things (IIoT), Cyber-Physical Systems (CPS), Ultra-Large-Scale Systems (ULS or ULSS), Complex, Large, Integrated, Open Systems (CLIOS), Intelligent Buildings, Interactive Architecture, Complex, Interactive Architectural Ecosystems (CIAE), Socio-Technical Systems (STS), Social-Cyber-Physical Systems (SCPS), Ubiquitous Computing, Augmented Reality, Virtual Reality, and beyond (but let's say Interconnected Systems of Systems as shorthand for these concerns). There are so many ways in which our design activities engage the design of interconnected systems of systems.
Vectorworks' own ConnectCAD, its pioneering work developing the GDTF file format, and its participation in OpenBIM standards development already engage this set of concerns. The pandemic accelerated the adoption of interconnected systems-of-systems technologies, and they are now becoming normal project requirements for the design of any business, retail environment, home, leisure/hospitality environment, entertainment environment, public environment, healthcare environment, outdoor environment, or educational environment: any and all environments, and most large-scale, organized social activities. Please consider adding an Interconnected Systems of Systems theme to the roadmap. This could track how Vectorworks software features enable designers to address these emerging project requirements. ETHICS: Whether it is Corporate Social Responsibility (CSR), Environmental, Social, and Corporate Governance (ESG), the various 'World's Most...' ethical/just/... companies lists, or the 2030 Challenge and other similar pledges and activities, companies are increasingly judged and valued not just on their technologies, growth forecasts, and balance sheets, but on how they conduct business, how their products and services enable (or impede) their clients and users in conducting business ethically, and how ethical their consultants and vendors are judged to be.
In addition, since so many emerging technologies and work practices exist partially or completely beyond the bounds of existing legal and regulatory frameworks, to a certain degree, companies and service providers and consultants and vendors who enable use of emerging technologies are on the honor system to do what is right and just and ethical, regardless of whether or not there is a legal or regulatory mechanism in place to enforce compliance (e.g., RFID tags, drones, exoskeletons, computer vision, robotics, mechatronics, automation, and motion capture technologies on construction sites and IoT equipment in homes and commercial, institutional, entertainment, and business facilities). Given this, the topic of ethics is increasingly a major point of concern for clients, collaborators, and end users. In addition, and specifically with regard to Vectorworks' user base, design is fundamentally an ethical act. What we design and how we implement it fundamentally impacts people's health, wellbeing, performance, behaviors, comfort, and perception of the world. Ethics is a primary concern for all designers. So it makes sense that Ethics is a considered dimension of features enabled by the tools that we use. Please consider adding an Ethics theme to the roadmap. (Note: The terminology is not my focus. Call it something different if more appropriate. But please consider adding themes to the roadmap that address the concerns described above.)

-

I agree that participating in Omniverse is important. It is not only the large BIM authoring tool vendors on this platform. Many of Vectorworks' partners are engaging this platform. And, given that so many industries, and especially AEC and big data / data science, have come to rely on NVidia architecture as fundamental to their production, collaboration, and analysis tools, more likely than not this initiative will have staying power.

-

The uncertain future of Architectural Software

jmanganelli replied to Jonnoxx's topic in Architecture

Have you tried Bluebeam Studio? I've done a lot of markups in it with two different companies. It's great.

The uncertain future of Architectural Software

jmanganelli replied to Jonnoxx's topic in Architecture

Have you looked at Bentley iModel? What about a 3D PDF, or the Vectorworks Viewer?

The uncertain future of Architectural Software

jmanganelli replied to Jonnoxx's topic in Architecture

@Art V SEPS2BIM is a space and equipment planning system that geolocates geometry, anything at all. It could be used for BIM, but it could also be used for a lot of things other than BIM. It is also very cool that the developers have done extensive implementation work with Veterans Affairs (VA), so the technology and workflow are well developed and tested. In some cases, BIMStorm information is kept in regular spreadsheets and databases. Very flexible. Again, beyond BIM: these can also be used for asset management, operations and maintenance, and other purposes.

The uncertain future of Architectural Software

jmanganelli replied to Jonnoxx's topic in Architecture

Look at the BIMStorm and SEPS2BIM links above. Great work. They have developed nonproprietary, tool-agnostic ways to store project information and use it to populate BIM and CAD models with platform-independent project data.

The uncertain future of Architectural Software

jmanganelli replied to Jonnoxx's topic in Architecture

@Diamond I strongly suggest reading Schön's book, The Reflective Practitioner.

The uncertain future of Architectural Software

jmanganelli replied to Jonnoxx's topic in Architecture

@Jonnoxx thank you for this very thoughtful post. I appreciate the care you put into it. I am adding some points and elaborations: Look at what Siemens, Dassault Systemes, and Intergraph/Hexagon are doing, and also Bentley. They have more mature visions and more advanced methods and tools. Who knows whether it will be Microsoft, Google, Apple, or some other tech giant that comes to dominate the AEC industry. Any of them could buy most of the major BIM authoring tool vendors outright with only a fraction of their free cash. I don't imagine there is much interest in doing so, though. I could instead see them buying Fluor, Jacobs, Stantec, HOK, or any of the major AEC/EPC firms, as well as Siemens, Schneider Electric, Rockwell Automation, Honeywell, and Johnson Controls. They don't need legacy code. They have software development domain knowledge, systems engineering domain knowledge, and vast user data. What they need is design, construction, and operations domain knowledge, and facilities operations data. I don't think they will have an interest in BIM authoring tool vendors or the BIM authoring tool market, other than perhaps as an acqui-hire opportunity. I think the big tech companies want to own connected environments, smart buildings, smart cities, smart transportation, etc., and to own the smart buildings/cities domains they need to understand, and ultimately optimize and manage, how buildings and communities are designed, constructed, and maintained. The BIM tool is among the least interesting and least useful technologies for understanding how to design and build buildings and environments as part of smart, connected systems of systems, because the major BIM authoring tool vendors seem to view the data capture and analysis their tools perform through the traditional lenses of their industries and roles, rather than through the lens of making tools that facilitate the creation of smart, connected systems.
It would be more useful for the tech giants to buy the 10 biggest AEC/EPC firms, as mentioned above, let them keep running as normal for years, gradually introduce smart systems requirements, and mine and collect all of the data associated with design, construction, and operations, as well as monitor, collect, and analyze every email, meeting, phone call, drawing, and keystroke of the subject matter experts who design, build, and operate built-environment systems of systems. This is how you map the entire facilities/environment/operations ecosystem. At the same time, on the back end, machine learning would analyze all of that data and figure out how to do it all better and more efficiently, in a way that would have clients clamoring for your facilities and environments, in which, in turn, their data is also mined and operationalized. Perhaps occasionally, if they see an opportunity based on their data mining and analysis, they may suggest methodological improvements to the AEC/EPC firms, but mostly we would be the study subjects for their systems analyses. Look beyond the AEC industry's inward, somewhat idiosyncratic focus. Look at the aerospace, defense, computer science, automotive, energy, telecommunications, and industrial-scale software industries. You'll find something like a convergence or agreement on a number of methods and tools for systems-of-systems development and optimization that are, mostly, superior to the methods and tools found in the AEC industry. There are fairly standard formalisms for systems-of-systems representation, requirements elicitation/development/validation, analysis, optimization, and operations. There are well-developed methods of co-simulation between software systems, hardware systems, and hardware-software systems, as well as hardware-in-the-loop, software-in-the-loop, and human-in-the-loop co-simulation for systems-of-systems development.
There are well-established paradigms for emerging project types, including cyber-physical systems, socio-technical systems, ultra-large-scale systems, multi-scale systems, and complex, large, integrated, open systems. Siemens and Dassault Systemes are keyed in on these constructs and methods, and their tools reflect it. The AEC industry is years to decades behind in recognizing and adopting this knowledge and these methods and tools. Why does all of this matter? Sit back and look around you. Look at your computer, your monitor, your smartphone, perhaps your smartwatch, your tablet, perhaps your wearable technology, and your car (if it was made in the last 25 years, it is as much or more a computer and network data node than a car); perhaps also your cloud systems, your Alexa or Nest or Ring or other home automation systems, your security and access control systems, and the transportation, distribution, and telecommunications systems of systems upon which you rely. Our digitally augmented world is designed, made, optimized, and operated through the constructs, measures, methods, and tools I am referencing above, which are widely used in all other major capital-intensive, mission-critical industries, with the surprising exception of buildings and the built environment.
And yet, it is inevitable that these same constructs, measures, methods, and tools will be applied to the built environment because: (1) they can be, since the built environment is designable, constructable, and operable using the same methods as all complex, dynamical systems of systems; (2) there is so much profit to be made and control to be gained from fully connecting the built environment that commercial and governmental actors are compelled to turn it into an extension of the rest of our digitally enhanced society; and (3) these technologies, methods, and tools are already being applied to the built environment through the control systems and building automation systems mentioned above, so there is a natural and easy path into the AEC industry that is at least somewhat familiar to the tech giants. If you go down the rabbit holes implied in the content referenced above, you'll find that, as far as systems geometry authoring and simulation platforms go, Dassault Systemes and Siemens have tools that are much better tailored to designing complex systems of systems than any BIM authoring tool vendor's tools. As an example, Dassault Systemes' CATIA platform includes components for overlaying systems engineering methods and performing hardware/software/human co-simulations. Siemens' Tecnomatix line, in conjunction with their NX line, allows for robust modeling and simulation of human-environment interactions and design. The problem, though, is that these platforms are very expensive. In 2010 I got a software grant from Siemens to use their NX MCAD tool in conjunction with their Tecnomatix Process Simulate Human tools in order to research bringing systems engineering design and analysis methods, as found in aerospace or defense, into the AEC industry to improve the industry's ability to execute model-based, evidence-based design.
The software tools that Siemens let me use for that project were valued in excess of $500,000. I don't know what a full suite of Dassault Systemes modeling, systems engineering, and co-simulation tools would cost, but from what I have been told and have read, it would also be 3 to 20 times as much as what AEC professionals typically pay for BIM authoring tools. So there is a fundamental barrier to using these superior systems design and analysis tools in our industry, as our industry is not set up to afford this level of modeling and analysis. Beyond this, of course, our industry would also have to start integrating data scientists, human factors engineers, systems engineers, and computer scientists as standard members of design teams. This is done to a limited extent in the heavy industrial, military, event, and healthcare subsectors of the AEC industry, but it is not widespread, and it would also entail significant additional cost. Bringing this all back to Vectorworks, one of the reasons I like Vectorworks is that it is a fundamentally very sound modeling and drawing tool with great representational ability and a solid internal data management system; it handles big data sets well and is relatively inexpensive, at least in the US market compared to its competitors. From my perspective, Rhino and Vectorworks are similar in that they offer tremendous value for the cost and are very efficient for the most basic and fundamental tasks that we will always need to do, even if they don't offer all of the bells and whistles of some of the more expensive packages. But, given my comments above, it should be clear that I believe the more expensive BIM authoring packages either suffer from a poor vision and poor alignment with where our industry is headed (i.e., how to model/simulate smart, connected environments) or suffer from an inability to deliver the needed features and functionality affordably.
I should also say that with Vectorworks' focus and leadership on tools like Spotlight and ConnectCAD, in a low-key, low-cost kind of way, Vectorworks is actually better prepared for a transition to the methods, tools, and workflows needed to design smart, connected environments than any, or at least most, of the other BIM authoring tool vendors currently appear to be. Combine this with Vectorworks' strategic relationship with Siemens, its ability to handle big data sets, its database technology, and its generally user-friendly modeling, drawing, and graphics capabilities, and I think that if Vectorworks plays its hand well, it could leapfrog the other BIM authoring vendors and gain market dominance. But as per the comment about complexity below, there are too many factors to know what will play out or how, and I do not work for any of these companies or know what they have in their pipelines. These are merely my observations, based on my experience and on what information is publicly available right now. In case you are interested, look at the NIST Cyber Physical Systems Testbed and start to think about how it will integrate with AEC industry BIM authoring tools and project management tools. This will give you a sense of how our industry's methods and tools have to evolve to participate in the connected future of the built environment. Or look at IES' ICL, which is also heading in the right direction. Also look at MATLAB/Simulink, and at how energy analysis software like OpenStudio can be integrated with Modelica to perform systems-of-systems analyses. In case you're interested, take the concept of a digital twin as it is typically presented and set it aside. A geometry model as a data model is not necessarily a particularly interesting or useful data model. An equation is a data model. A story of any kind, for instance a children's book, is a data model. A spreadsheet is a data model.
Building design, construction, and operations have been represented by data models since people sketched out plans in the sand with sticks. They have been represented by digital data models since the late 19th century, when plant, railroad, and utility operators first mapped out electricity distribution networks with wall-sized painted diagrams that had indicator lights at key points to show the status of system functionality. A digital twin is also somewhat useless as a concept because almost all, if not all, of the discussion I see about digital twins supposes that they are in fact high-fidelity digital representations of actual physical and logical systems. But this supposition fails to take into account system complexity, components in the model that may be poorly represented or missing, and the rate of change of system states (especially changes that occur outside of the designed performance envelope). Once a system becomes complex enough, when all of the little assumptions, estimates, unknowns, and fudge factors start to add up and compound, and once the system experiences a series of external, unplanned-for, traumatic events (like a lightning strike, earthquake, flood, hurricane, tornado, fire, systematic and chronic misuse, etc.), the system's behavior and structure become somewhat unpredictable, either temporarily or permanently. This is a mathematical and scientific truth. Complex, dynamical systems (i.e., complex systems whose states change over time) are only modelable and predictable when the system occupies something like a natural harmonic frequency or an equilibrium state (which may be far from maximum entropy, i.e., highly structured). When the system falls out of such an equilibrium state or harmonic frequency, its behavior can become fundamentally chaotic and unpredictable. Once the model loses fidelity, structure, and stability, how do we get it back in sync with reality?
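To make the sensitivity argument concrete, here is a minimal Python sketch using the logistic map, a standard textbook chaotic system swapped in purely for illustration (it is not a building model). A "real" system and a hypothetical "twin" start 1e-10 apart, and the gap between them is tracked as both are stepped forward with identical rules. The parameter r = 4 and the starting value 0.2 are arbitrary choices for the demo.

```python
def logistic(x, r=4.0):
    """One step of the logistic map; r = 4 puts it in its fully chaotic regime."""
    return r * x * (1.0 - x)


def divergence(x0=0.2, offset=1e-10, steps=60):
    """Step a 'real' system and a 'twin' that starts `offset` away.

    Returns the absolute difference between the two states after each step.
    """
    real, twin = x0, x0 + offset
    diffs = []
    for _ in range(steps):
        real, twin = logistic(real), logistic(twin)
        diffs.append(abs(real - twin))
    return diffs


diffs = divergence()
for step in (1, 10, 20, 30, 40, 50, 60):
    print(f"step {step:2d}: |real - twin| = {diffs[step - 1]:.2e}")
```

Even with a perfect copy of the rules, the twin tracks reality closely for the first steps and then drifts completely out of sync, because the tiny initial error roughly doubles each step on average.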
Maybe it's easy. Maybe it's impossible. Maybe getting it back in sync can be approximated, but at the cost of a further loss of fidelity and an increased likelihood of wandering into chaotic states in the future. The buildings that we design are complex, dynamical systems. They wander in and out of states of structured, stable, predictable behavior and chaotic, unpredictable behavior throughout their lifecycles. A digital twin can be made, and it may even be mostly accurate much of the time, especially if system states, variables, and contextual conditions are kept within limited performance envelopes, but digital twins can never be completely accurate or stable representations of actual building performance, and they cannot always accurately predict the conditions and future states of the building's assets. There's a great book that explains this issue of the limits of predicting complexity using a pool table analogy. Here's the key quote from the book: "If you know a set of basic parameters concerning the ball at rest, you can compute the resistance of the table (quite elementary), and can gauge the strength of the impact, then it is rather easy to predict what would happen at the first hit. The second impact becomes more complicated, but possible; and more precision is called for. The problem is that to correctly compute the ninth impact, you need to take into account the gravitational pull of someone standing next to the table (modestly, Berry’s computations use a weight of less than 150 pounds). And to compute the fifty-sixth impact, every single elementary particle in the universe needs to be present in your assumptions! An electron at the edge of the universe, separated from us by 10 billion light-years, must figure in the calculations, since it exerts a meaningful effect on the outcome." (p. 178)
If this is true for trying to predict the paths of pool balls on a pool table, then how much more is it true of the way that buildings (and by extension digital twins) function! In case you're interested, all is not lost for architects, engineers, and contractors. There is a well-established way in which AEC professionals maintain relevance, and it is rooted in our history. Over time, AEC professionals figured out something fundamental about how to design, construct, and operate complex, dynamical systems: rather than trying to model and manage the complexity and dynamism, eliminate it instead. Make the building as simple as possible, but no simpler. AEC professionals figured something else out, too, as codified in Donald Schön's great book, The Reflective Practitioner. AEC professionals design systems of systems unlike any other industry, and there is wisdom in our creative madness. What Schön calls reflective practice is like an early, bespoke version of agent-based modeling that we naturally developed in the AEC industry. The idea is that, rather than trying to capture and manage all of the possible variables and permutations of a system of systems, you start with a few big concepts, simply defined, get them aligned and working as something like a cohesive whole by imagining and playing out what-if scenarios and iterating, and then the rest falls into place through iterative refinement, even though you may never be fully aware of, or understand, the full system of systems. This practice will remain valid and is very powerful. It is also very efficient and effective. There was a study out of CIFE about 8 years ago that compared a group of seasoned AEC veterans scoping a project to a machine learning algorithm scoping the same project. The machine learning algorithm produced better results than the team of human experts.
However, the algorithm's results were not that much better, and it ran for many more hours than the SMEs spent conceiving the project. The point is that while the smart tech produced a superior result overall, the humans were surprisingly competitive and efficient: they produced a fairly similar result while spending far fewer hours analyzing the project needs and logistics. So again, there is a place for AEC SMEs. We are not irrelevant now, nor will we be for a long time, if ever. Rather, the best outcome is when that deep and efficient human expertise is supercharged with just enough machine learning. In case you are interested, also look into SEPS2BIM and BIMStorm. These are great efforts. They have worked on building systems and component representations that are agnostic to any particular BIM authoring tool and can be loaded into any of them. Also, with SEPS2BIM, they've geotagged all components, so that everything is listed in a database as existing in a specific location in the world.
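As a rough illustration of the "tool-agnostic, geotagged component list" idea, here is a minimal Python sketch that keeps component records in plain CSV, which any BIM/CAD tool or spreadsheet can import. SEPS2BIM's and BIMStorm's actual schemas are not described here; the `ComponentRecord` fields, IDs, and coordinates below are hypothetical placeholders.

```python
import csv
import io
from dataclasses import asdict, dataclass, fields


@dataclass
class ComponentRecord:
    """Hypothetical tool-agnostic component row: identity, category, world location."""
    component_id: str
    description: str
    category: str
    latitude: float     # decimal degrees (WGS 84 assumed)
    longitude: float    # decimal degrees (WGS 84 assumed)
    elevation_m: float  # height above a project-defined datum


def to_csv(records):
    """Serialize records to CSV text with a header row."""
    buf = io.StringIO()
    writer = csv.DictWriter(buf, fieldnames=[f.name for f in fields(ComponentRecord)])
    writer.writeheader()
    for rec in records:
        writer.writerow(asdict(rec))
    return buf.getvalue()


def from_csv(text):
    """Parse CSV text back into records, restoring the numeric fields."""
    return [
        ComponentRecord(
            row["component_id"], row["description"], row["category"],
            float(row["latitude"]), float(row["longitude"]), float(row["elevation_m"]),
        )
        for row in csv.DictReader(io.StringIO(text))
    ]


# Placeholder data for illustration only.
components = [
    ComponentRecord("EQ-0001", "Exam light, ceiling mounted", "Medical equipment", 40.0001, -75.0002, 3.2),
    ComponentRecord("EQ-0002", "Sink, hand wash", "Plumbing fixture", 40.0003, -75.0004, 0.9),
]
print(to_csv(components))
```

The design point is that the record lives outside any one authoring tool: the same CSV can populate a Vectorworks worksheet, another BIM tool's schedule, or an asset management database.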

Yeah, when I got Vectorworks Architect two years ago, Graphisoft quoted me a price for Archicad that was almost exactly twice what it cost to buy Vectorworks Architect, and Revit's and OpenBuildings' prices were similar to Archicad's. I did not feel that Archicad, Revit, or OpenBuildings offered enough additional features, functionality, and efficiency benefits compared to Vectorworks Architect to justify their price premiums. If I were in a country where Vectorworks Architect cost twice as much as its competitors, I would have made a different decision.

-

I guess since DBrown indicated that he pays about half as much for Archicad in Argentina as I was quoted in the US, and since others are indicating that Archicad can cost even more in the EU, this suggests that Graphisoft charges 2 to 3 times as much for the same product in some places as in others. Is this correct? Even if Vectorworks varies its price by country when optimizing localized versions, is Vectorworks charging 2 to 3 times the price in some places compared to others? Does it cost that much to make a localized version? If so, I had no idea that localization cost so much. I was aware of variations due to currency exchange rates. The outsourced localization premium makes sense, but does it explain why Archicad was 50% cheaper for DBrown in Argentina than it is in the USA?

-

I have two 32” 4K Philips monitors that I got in January. They were about $300 each. Great way to work. I also still work on a 14” monitor, a 15” monitor, a 27” monitor, or two ancient 19” monitors, and I definitely miss the two big 4K monitors when I have to work on the smaller ones. A 32” 4K monitor is a nice size if you use half-size drawing sets a lot, because you can proof a complete half-size Arch D or Arch E1 sheet at 1:1 scale on screen to see how the sheets will look once plotted.
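Here is a quick Python check of the half-size claim, assuming a 16:9 panel (the 3840 × 2160 4K UHD format) and the standard ARCH D (24 × 36 in) and ARCH E1 (30 × 42 in) sheet sizes. It computes the panel's width and height from its diagonal and tests whether a landscape sheet at half scale fits. It ignores bezels and the screen space taken by toolbars and palettes, so treat it as a best case; many "32-inch" panels are actually 31.5 in diagonal, which is why that size is checked too.

```python
import math

# Full-size architectural sheets as (long side, short side) in inches.
ARCH_SHEETS = {"ARCH D": (36, 24), "ARCH E1": (42, 30)}


def panel_size(diagonal_in, aspect_w=16, aspect_h=9):
    """Return (width, height) in inches of a panel with the given diagonal and aspect ratio."""
    scale = diagonal_in / math.hypot(aspect_w, aspect_h)
    return aspect_w * scale, aspect_h * scale


def fits_at_half_size(sheet, diagonal_in):
    """True if the named sheet, printed at 50% scale in landscape, fits on the panel."""
    long_side, short_side = (d / 2 for d in ARCH_SHEETS[sheet])
    width, height = panel_size(diagonal_in)
    return long_side <= width and short_side <= height


for diag in (32, 31.5, 27):
    w, h = panel_size(diag)
    results = {s: fits_at_half_size(s, diag) for s in ARCH_SHEETS}
    print(f'{diag}" panel: {w:.1f} x {h:.1f} in; half-size fits: {results}')
```

On these assumptions, a half-size ARCH E1 sheet (21 × 15 in) fits on a 32" or 31.5" panel but not on a 27" one, while a half-size ARCH D sheet (18 × 12 in) fits on all three.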

-

Hidden Line View - Revit, Archicad & Vectorworks

jmanganelli replied to KrisM's topic in General Discussion

@KrisM, as I said, I only experience a very minor (< 2 seconds) slowdown in hidden line rendering in Vectorworks Architect 2021, and only when first switching to hidden line rendering, not during continued use of it. This is on a two-year-old, middle-of-the-road laptop/tablet hybrid. I experience no slowdown when rendering on a true workstation. This is why I asked about your settings, OS, and computer hardware. The performance problem may not be with Vectorworks.

This sounds like a perfect application for Marionette.

-

Hidden Line View - Revit, Archicad & Vectorworks

jmanganelli replied to KrisM's topic in General Discussion

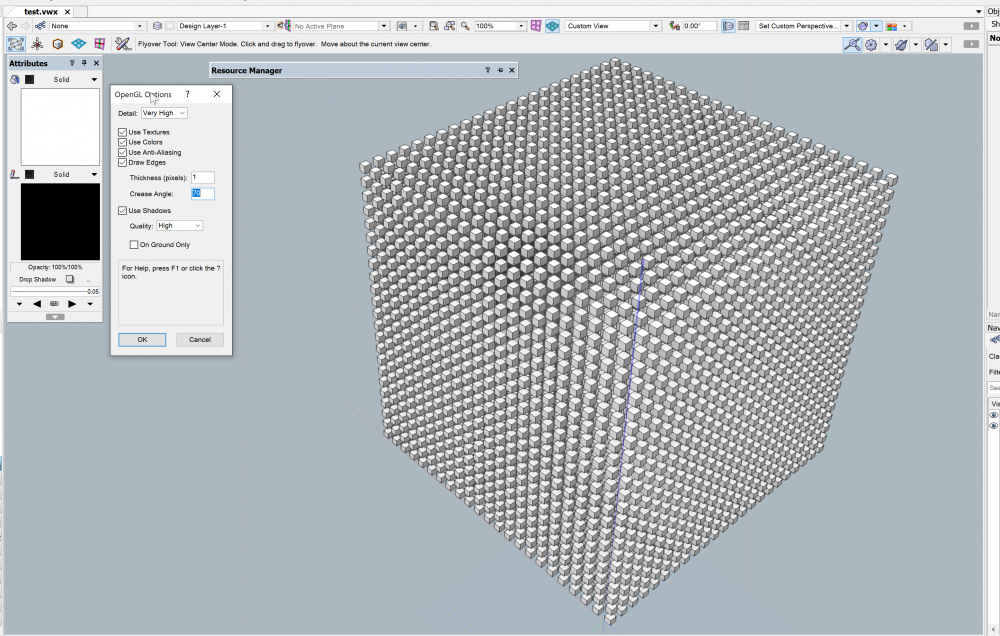

@KrisM What are the hidden line render, lighting, and OpenGL settings in Vectorworks? And what are they in Revit? What are the system specifications? What is the OS? How big is the model? As a point of reference, I just modeled 12,167 1'x1' cubes spaced 2' apart in Vectorworks Architect 2021, set the OpenGL settings to their highest-quality options for geometry and shadows, and changed the lighting settings to exterior lighting with ambient occlusion (50%) turned on, an occlusion distance of 2'-0", and a light color temperature of 6500 K. I am using a two-year-old HP ZBook x2 G4 (a Windows workstation tablet) running the latest version of Windows 10, with a dual-core i7-7600U processor, 32 GB of RAM, an SSD, and an Nvidia Quadro M620 GPU with 2 GB of VRAM, on a 4K DreamColor screen with DreamColor enabled. As far as laptop computers go, its specs are middle of the road to low end, and especially weak on VRAM, even though they are good for a tablet. I don't have any lag in OpenGL with these settings, and only a tiny bit of lag in hidden line render mode when I first switch to it. On my full workstation, which has a Ryzen 9 3900X CPU and an RTX 2080 Ultra GPU with 8 GB of VRAM, I see no lag at all, even in hidden line render mode.
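For anyone who wants to rebuild a comparable benchmark: 12,167 is exactly 23³, so this sketch assumes the test was a 23 × 23 × 23 grid (the post doesn't say). It also assumes "spaced 2' apart" means a 2' clear gap between cube faces; pass `gap_ft=1.0` if you read it as 2' center to center. The Python below only generates cube corner coordinates and the overall grid size; it makes no Vectorworks API calls, and the function names are hypothetical.

```python
from itertools import product


def cube_grid(n=23, size_ft=1.0, gap_ft=2.0):
    """Yield (x, y, z) corner coordinates, in feet, for an n x n x n grid of cubes.

    The pitch between neighboring corners is the cube size plus the clear gap.
    """
    pitch = size_ft + gap_ft
    for i, j, k in product(range(n), repeat=3):
        yield (i * pitch, j * pitch, k * pitch)


def grid_extent(n=23, size_ft=1.0, gap_ft=2.0):
    """Overall edge length of the grid in feet: n cubes plus (n - 1) gaps."""
    return n * size_ft + (n - 1) * gap_ft


corners = list(cube_grid())
print(len(corners), "cubes;", grid_extent(), "ft per side")
```

Feed the corners into whatever placement method you prefer (for example, a Marionette network) to reproduce a model of roughly the same size and density.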

I have direct experience with Revit, AECOsim (now OpenBuildings), and Vectorworks. Revit has major strengths and surprisingly serious weaknesses that are absolute productivity killers; OpenBuildings has major strengths and surprisingly serious weaknesses that are absolute productivity killers; and I'm sure that Archicad does as well, as indicated by its forum having long threads with titles like 'archicad is dying' and 'things broken in archicad 23', and too many other threads to mention. They are all impressive in one way or another, but they are also all imperfect. Vectorworks also has major strengths and some surprisingly serious weaknesses that are absolute productivity killers. My observation is that people in this thread keep threatening to cancel their Service Select membership or switch to another BIM authoring tool out of frustration with Vectorworks' development speed. Why? I think the frustration is better channeled into constructive, collegial criticism. There is no demonstrably better BIM authoring tool at any price; there is only a long set of trade-offs to be considered. I guess the grass always seems greener on the other side of the fence. My point is that if you work in the other tools, too, you will know that none of them stands head and shoulders above or below the others at this time. Each is modestly better and worse in different ways. As the song goes, 'If you can't be with the one you love, love the one you're with.' In the context of this thread, I think that 'the one you love' is a fictitious fantasy, and the ones you can be with all fall short of that fantasy... but that doesn't mean they're without value or are poor tools. Perhaps it just means that expectations have to be adjusted.


-

Does any vendor have a great stair tool? My experience with Revit, AECOsim, and AutoCAD is that none of their stair tools are great for anything other than the most basic stairs. I don’t know about Archicad personally, but I know that Archicad users still complain about the Archicad stair tool a lot on their forums, even after it was revamped. I don’t see how Vectorworks not being able to do something that none of its competitors have done should be viewed as a failure on Vectorworks' part.

-

It must be priced very differently from country to country? I did not know that. Did Graphisoft cut their prices drastically, or does this mean that they charge far more in the US than they do elsewhere? When I was deciding between Archicad and Vectorworks Architect a few years ago, the price I was quoted was closer to $5,000 for the Archicad license, plus closer to $1,000 for maintenance on top of that. Did that change?

-

I find the Excel import/export features very useful. I think that Vectorworks is advancing its tools as well as or better than any of the vendors; read some of the other vendors' tool forums. Price is a factor, too. Vectorworks Architect costs 40% to 60% less than the leading competitors. Do we all want to pay the difference so that Vectorworks can greatly accelerate development? If not, then why complain? For what Vectorworks charges, its capabilities and pace of development more than hold their own against the competition.
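The price gap is larger than it sounds when read the other way around. If a tool costs a fraction (1 - d) of a competitor's price, matching the competitor means paying 1 / (1 - d) - 1 more than today. This small Python sketch works that out for the 40%, 50%, and 60% discounts mentioned in this thread; the discounts are illustrative inputs, not quoted prices.

```python
def extra_to_match(discount):
    """Fractional price increase needed to go from `discount` below a competitor's
    price up to the competitor's price: 1 / (1 - discount) - 1."""
    if not 0 <= discount < 1:
        raise ValueError("discount must be in [0, 1)")
    return 1.0 / (1.0 - discount) - 1.0


for d in (0.40, 0.50, 0.60):
    print(f"{d:.0%} cheaper -> pay {extra_to_match(d):.0%} more to match")
```

So a 40% to 60% gap corresponds to paying roughly 67% to 150% more, and the 50% case matches the "about twice the price" Archicad quote mentioned above.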

-

Do you manage to work with multiple view panes?

jmanganelli replied to line-weight's topic in General Discussion

@Taproot Is it possible that the performance issue is GPU-related? I’ve never had a problem with multiple viewports or floating panes on two monitors with a GTX 980 8 GB GPU or an RTX 2080 8 GB GPU, but I have had lag and render issues similar to what you describe on a 2 GB Quadro GPU.